Miloš Hašan

milos dot hasan at gmail dot com

I am currently a Principal Scientist at Adobe Research in San Jose. Before joining Adobe, I was a Principal Engineer at Autodesk in San Francisco (2012-2018), a postdoc at UC Berkeley (2010-2012, advised by Ravi Ramamoorthi), and a postdoc at Harvard University (2009-2010, with Hanspeter Pfister). I received my Ph.D. in Computer Science from Cornell in August 2009, under the supervision of Kavita Bala. I am interested in computer graphics and many related topics.

Selected publications

|

RNA: Relightable Neural Assets Krishna Mullia, Fujun Luan, Xin Sun, Miloš Hašan ACM Transactions on Graphics 2024 [project] [PDF] |

|

Neural Product Importance Sampling via Warp Composition Joey Litalien, Miloš Hašan, Fujun Luan, Krishna Mullia, Iliyan Georgiev SIGGRAPH Asia 2024 [project] [PDF] |

|

Procedural Material Generation with Reinforcement Learning Beichen Li, Yiwei Hu, Paul Guerrero, Miloš Hašan, Liang Shi, Valentin Deschaintre, Wojciech Matusik SIGGRAPH Asia 2024 [project] |

|

RGB↔X: Image Decomposition and Synthesis Using Material- and Lighting-aware Diffusion Models Zheng Zeng, Valentin Deschaintre, Iliyan Georgiev, Yannick Hold-Geoffroy, Yiwei Hu, Fujun Luan, Ling-Qi Yan, Miloš Hašan SIGGRAPH 2024 [project] [PDF] |

|

TexSliders: Diffusion-Based Texture Editing in CLIP Space Julia Guerrero-Viu, Miloš Hašan, Arthur Roullier, Midhun Harikumar, Yiwei Hu, Paul Guerrero, Diego Gutierrez, Belen Masia, Valentin Deschaintre SIGGRAPH 2024 [project] [PDF] |

|

Woven Fabric Capture with a Reflection-Transmission Photo Pair Yingjie Tang, Zixuan Li, Miloš Hašan, Jian Yang, Beibei Wang SIGGRAPH 2024 [project] [PDF] |

|

Precomputed Dynamic Appearance Synthesis and Rendering Yaoyi Bai, Miloš Hašan, Ling-Qi Yan EGSR 2024 [PDF] |

|

MCNeRF: Monte Carlo Rendering and Denoising for Real-Time NeRFs Kunal Gupta, Miloš Hašan, Zexiang Xu, Fujun Luan, Kalyan Sunkavalli, Xin Sun, Manmohan Chandraker, Sai Bi SIGGRAPH Asia 2023 [project] [PDF] [video] |

|

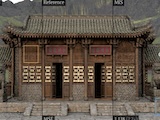

PSDR-Room: Single Photo to Scene using Differentiable Rendering Kai Yan, Fujun Luan, Miloš Hašan, Thibault Groueix, Valentin Deschaintre, and Shuang Zhao SIGGRAPH Asia 2023 [project] [PDF] |

|

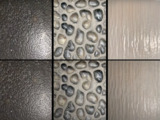

PhotoMat: A Material Generator Learned from Single Flash Photos Xilong Zhou, Miloš Hašan, Valentin Deschaintre, Paul Guerrero, Yannick Hold-Geoffroy, Kalyan Sunkavalli, and Nima Khademi Kalantari SIGGRAPH 2023 [project] [PDF] [video] |

|

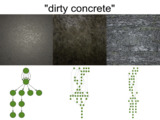

Generating Procedural Materials from Text or Image Prompts Yiwei Hu, Paul Guerrero, Miloš Hašan, Holly Rushmeier, Valentin Deschaintre SIGGRAPH 2023 [project] [PDF] |

|

Neural Biplane Representation for BTF Rendering and Acquisition Jiahui Fan, Beibei Wang, Miloš Hašan, Jian Yang, Ling-Qi Yan SIGGRAPH 2023 [project] [PDF] |

|

NeuSample: Importance Sampling for Neural Materials Bing Xu, Liwen Wu, Miloš Hašan, Fujun Luan, Iliyan Georgiev, Zexiang Xu, Ravi Ramamoorthi SIGGRAPH 2023 [project] [PDF] [arXiv] [video] |

|

Controlling Material Appearance by Examples Yiwei Hu, Miloš Hašan, Paul Guerrero, Holly Rushmeier, Valentin Deschaintre EGSR 2022 [project] [PDF] |

|

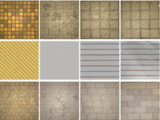

MatFormer: A Generative Model for Procedural Materials Paul Guerrero, Miloš Hašan, Kalyan Sunkavalli, Radomír Měch, Tamy Boubekeur, Niloy Mitra SIGGRAPH 2022 [project] [PDF] |

|

Rendering Neural Materials on Curved Surfaces Alexandr Kuznetsov, Xuezheng Wang, Krishna Mullia, Fujun Luan, Zexiang Xu, Miloš Hašan, Ravi Ramamoorthi SIGGRAPH 2022 [project] [PDF] [video] |

|

TileGen: Tileable, Controllable Material Generation and Capture Xilong Zhou, Miloš Hašan, Valentin Deschaintre, Paul Guerrero, Kalyan Sunkavalli, Nima Kalantari SIGGRAPH Asia 2022 [project] [PDF] |

|

Node Graph Optimization Using Differentiable Proxies Yiwei Hu, Paul Guerrero, Miloš Hašan, Holly Rushmeier, Valentin Deschaintre SIGGRAPH 2022 [project] [PDF] |

|

Differentiable Rendering of Neural SDFs through Reparameterization Sai Praveen Bangaru, Michaël Gharbi, Tzu-Mao Li, Fujun Luan, Kalyan Sunkavalli, Miloš Hašan, Sai Bi, Zexiang Xu, Gilbert Bernstein, Frédo Durand SIGGRAPH Asia 2022 [project] [PDF] |

|

Neural Layered BRDFs Jiahui Fan, Beibei Wang, Miloš Hašan, Jian Yang, Ling-Qi Yan SIGGRAPH 2022 [project] [PDF] [video] |

|

Woven Fabric Capture from a Single Photo Wenhua Jin, Beibei Wang, Miloš Hašan, Yu Guo, Steve Marschner, Ling-Qi Yan SIGGRAPH Asia 2022 [project] [PDF] [video] |

|

PhotoScene: Photorealistic Material and Lighting Transfer for Indoor Scenes Yu-Ying Yeh, Zhengqin Li, Yannick Hold-Geoffroy, Rui Zhu, Zexiang Xu, Miloš Hašan, Kalyan Sunkavalli, Manmohan Chandraker CVPR 2022 [project] [arXiv] [video] |

|

Physically-Based Editing of Indoor Scene Lighting from a Single Image Zhengqin Li, Jia Shi, Sai Bi, Rui Zhu, Kalyan Sunkavalli, Miloš Hašan, Zexiang Xu, Ravi Ramamoorthi, Manmohan Chandraker CVPR 2022 [project] [arXiv] |

|

SpongeCake: A Layered Microflake Surface Appearance Model Beibei Wang, Wenhua Jin, Miloš Hašan, Ling-Qi Yan ACM Transactions on Graphics 2022 [project] [PDF] |

|

NeuMIP: Multi-Resolution Neural Materials Alexandr Kuznetsov, Krishna Mullia, Zexiang Xu, Miloš Hašan, Ravi Ramamoorthi SIGGRAPH 2021 [project] [PDF] [video] |

|

Neural Complex Luminaires: Representation and Rendering Junqiu Zhu, Yaoyi Bai, Zilin Xu, Steve Bako, Edgar Velázquez-Armendáriz, Lu Wang, Pradeep Sen, Miloš Hašan, Ling-Qi Yan SIGGRAPH 2021 [paper] [video] |

|

OpenRooms: An Open Framework for Photorealistic Indoor Scene Datasets Zhengqin Li, Ting-Wei Yu, Shen Sang, Sarah Wang, Meng Song, Yuhan Liu, Yu-Ying Yeh, Rui Zhu, Nitesh Gundavarapu, Jia Shi, Sai Bi, Zexiang Xu, Hong-Xing Yu, Kalyan Sunkavalli, Miloš Hašan, Ravi Ramamoorthi, Manmohan Chandraker CVPR 2021 (Oral presentation) [project] [PDF] [video] |

|

NeuTex: Neural Texture Mapping for Volumetric Neural Rendering Fanbo Xiang, Zexiang Xu, Miloš Hašan, Yannick Hold-Geoffroy, Kalyan Sunkavalli, Hao Su CVPR 2021 (Oral presentation) [project] [arXiv] [video] |

|

MATch: Differentiable Material Graphs for Procedural Material Capture Liang Shi, Beichen Li, Miloš Hašan, Kalyan Sunkavalli, Tamy Boubekeur, Radomír Měch, Wojciech Matusik SIGGRAPH Asia 2020 [project] [PDF] [video] |

|

MaterialGAN: Reflectance Capture using a Generative SVBRDF Model Yu Guo, Cameron Smith, Miloš Hašan, Kalyan Sunkavalli, and Shuang Zhao SIGGRAPH Asia 2020 [project] [PDF] [video] |

|

A Bayesian Inference Framework for Procedural Material Parameter Estimation Yu Guo, Miloš Hašan, Ling-Qi Yan, Shuang Zhao Pacific Graphics 2020 (Computer Graphics Forum) [project] [PDF] [supplement] |

|

Path Cuts: Efficient Rendering of Pure Specular Light Transport Beibei Wang, Miloš Hašan, Ling-Qi Yan SIGGRAPH Asia 2020 [PDF] [video] |

|

Deep Reflectance Volumes: Relightable Reconstructions from Multi-View Photometric Images Sai Bi, Zexiang Xu, Kalyan Sunkavalli, Miloš Hašan, Yannick Hold-Geoffroy, David Kriegman, Ravi Ramamoorthi ECCV 2020 [arXiv] [PDF] |

|

Neural Reflectance Fields for Appearance Acquisition Sai Bi, Zexiang Xu, Pratul Srinivasan, Ben Mildenhall, Kalyan Sunkavalli, Miloš Hašan, Yannick Hold-Geoffroy, David Kriegman, Ravi Ramamoorthi [arXiv] [PDF] |

|

Example-Based Microstructure Rendering with Constant Storage Beibei Wang, Miloš Hašan, Nicolas Holzschuch, Ling-Qi Yan ACM Transactions on Graphics, 2020 [PDF] [video] |

|

Learning Generative Models for Rendering Specular Microgeometry Alexandr Kuznetsov, Miloš Hašan, Zexiang Xu, Ling-Qi Yan, Bruce Walter, Nima Khademi Kalantari, Steve Marschner, Ravi Ramamoorthi SIGGRAPH Asia 2019 [PDF] [video] |

|

Position-Free Monte Carlo Simulation for Arbitrary Layered BSDFs Yu Guo, Miloš Hašan, Shuang Zhao SIGGRAPH Asia 2018 [project] [PDF] [video] [code] |

|

Rendering Specular Microgeometry with Wave Optics Ling-Qi Yan, Miloš Hašan, Bruce Walter, Steve Marschner, Ravi Ramamoorthi SIGGRAPH 2018 [PDF] [video] [supplementary] |

|

Simulating the structure and texture of solid wood Albert Liu, Zhao Dong, Miloš Hašan, Steve Marschner SIGGRAPH Asia 2016 [PDF] [video] [supplementary] |

|

Position-Normal Distributions for Efficient Rendering of Specular Microstructure Ling-Qi Yan, Miloš Hašan, Steve Marschner, Ravi Ramamoorthi SIGGRAPH 2016 [PDF] [video] |

|

Rendering Glints on High-Resolution Normal-Mapped Specular Surfaces Ling-Qi Yan*, Miloš Hašan*, Wenzel Jakob, Jason Lawrence, Steve Marschner, Ravi Ramamoorthi (* dual first authors) SIGGRAPH 2014 [PDF] [video] [supplementary] |

|

Discrete Stochastic Microfacet Models Wenzel Jakob, Miloš Hašan, Ling-Qi Yan, Jason Lawrence, Ravi Ramamoorthi, Steve Marschner SIGGRAPH 2014 [PDF] [video] [project page] |

|

Progressive Light Transport Simulation on the GPU: Survey and Improvements Tomáš Davidovič, Jaroslav Křivánek, Miloš Hašan, Philipp Slusallek ACM Transactions on Graphics, 2014 [PDF] [supplementary] [project page] |

|

Modular Flux Transfer: Efficient Rendering of High-Resolution Volumes with Repeated Structures Shuang Zhao, Miloš Hašan, Ravi Ramamoorthi, Kavita Bala SIGGRAPH 2013 [PDF] [video] [project page] |

|

Interactive Albedo Editing in Path-Traced Volumetric Materials Miloš Hašan, Ravi Ramamoorthi ACM Transactions on Graphics, 2013 [PDF] [video] |

|

Combining Global and Local Virtual Lights for Detailed Glossy Illumination Tomáš Davidovič, Jaroslav Křivánek, Miloš Hašan, Philipp Slusallek, Kavita Bala SIGGRAPH Asia 2010 [PDF] [Supplemental PDF] [PPTX] |

|

Physical Reproduction of Materials with Specified Subsurface Scattering Miloš Hašan, Martin Fuchs, Wojciech Matusik, Hanspeter Pfister, Szymon Rusinkiewicz SIGGRAPH 2010 [PDF] [slides] |

|

Virtual Spherical Lights for Many-Light Rendering of Glossy Scenes Miloš Hašan, Jaroslav Křivánek, Bruce Walter, Kavita Bala SIGGRAPH Asia 2009 [PDF] [PPTX] [HLSL shader] |

|

Automatic Bounding of Programmable Shaders for Efficient Global Illumination Edgar Velázquez-Armendáriz, Shuang Zhao, Miloš Hašan, Bruce Walter, Kavita Bala SIGGRAPH Asia 2009 [PDF] |

|

Tensor Clustering for Rendering Many-Light Animations Miloš Hašan, Edgar Velázquez-Armendáriz, Fabio Pellacini, Kavita Bala EGSR 2008 [PDF] [PPT] [video] [comparison video] [diff video] |

|

Matrix Row-Column Sampling for the Many-Light Problem Miloš Hašan, Fabio Pellacini, Kavita Bala SIGGRAPH 2007 [PDF] [PPT] |

|

Direct-to-Indirect Transfer for Cinematic Relighting Miloš Hašan, Fabio Pellacini, Kavita Bala SIGGRAPH 2006 [PDF] [PPT] [video] |

Ph.D. Thesis

|

Matrix Sampling for Global Illumination Miloš Hašan (advised by Kavita Bala) Cornell, August 2009 [link] |